Professor

ProfessorPSA: LM wipes good known properties when unknown results occur

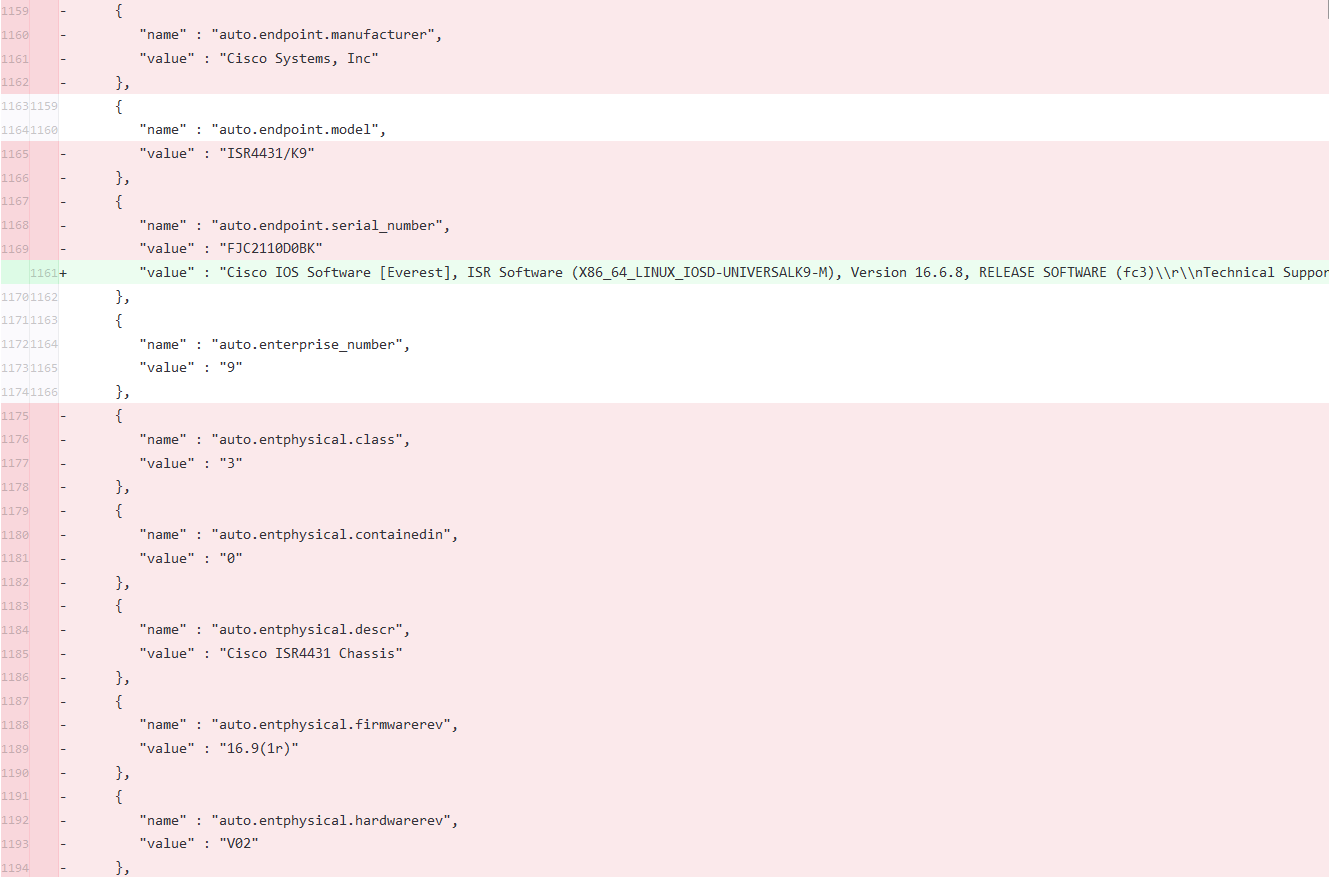

I have recently found that due to the excellent programming skills in the dev team that properties that have previously been autodiscovered can be wiped out when ephemeral issues produce unknown (no data) results. A good example is system.ips -- if the data has been scanned properly in the past and a blip occurs with no data, the previous values get overwritten with just the configured IP of the device. That leads to various fun side effects like NetFlow data not being matched to the device. To make things worse, the “no data” result does not set an internal flag to run a new AD scan earlier and you have to wait up to 24 hours for a regularly scheduled scan.

I created a bug ticket requesting they set that flag and run a new scan as soon as possible, but was basically told to pound sand. My workaround was to use an undocumented API endpoint to trigger on specified devices so I stop losing NetFlow data and I scheduled it hourly. The “solution” I was given was to add a netflow property to hardcode the needed IP address for each device -- works, but it is a brittle fix and leads to undesirable manual property management. Beyond that, this issue affects more than NetFlow, that was just the problem that lead me to realize what was happening. Other properties routinely get messed up that could affect processing.

This class of problem (replacing good data with unknown data) frequently occurs in modules as well -- for example, a lot of the Powershell configsource modules lack sufficient error checking and unknown results replace previously known good results, leading to change thrashing. Or they often forget to sort/normalize output leading to similar effects. The good news on those is they usually (eventually) listen to me.

Anyone who wants to use my workaround can use this script (or at least the central logic if you prefer something other than Perl). I still lose data, but the window is smaller.

https://github.com/willingminds/lmapi-scripts/blob/master/lm-action